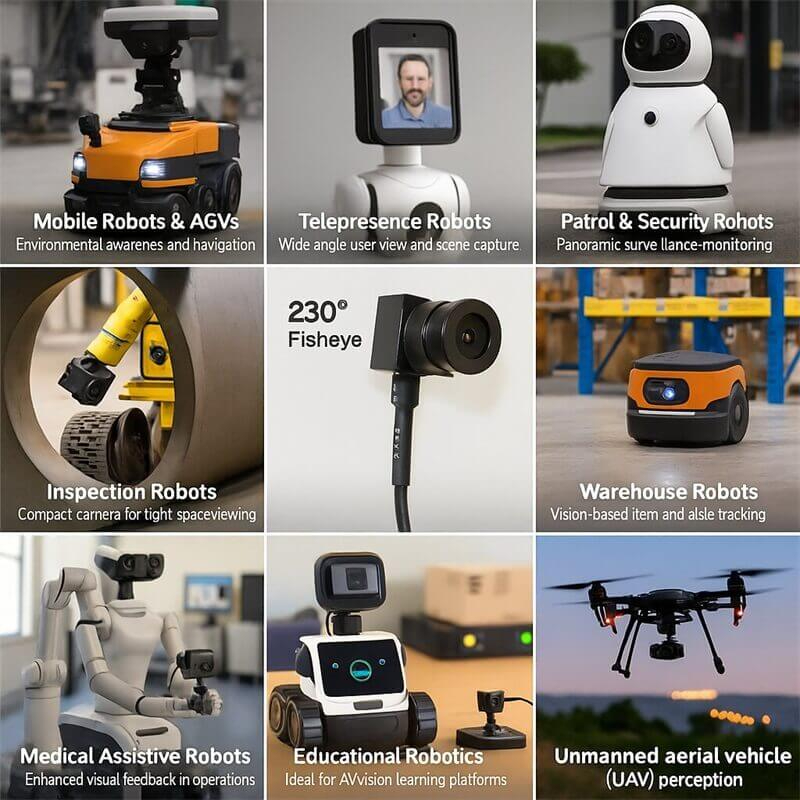

In robotic embedded vision systems, hardware selection often focuses on explicit parameters such as resolution, frame rate, and dynamic range, while overlooking power consumption—an “invisible metric.” In reality, low-power USB modules are far more than just “energy-saving designs”; they are a core factor determining battery life, operational stability, and environmental adaptability. For battery-powered devices such as collaborative robots, mobile robots, and drones, low-power capability can even be the key enabler for successful deployment.

The endurance of mobile robots directly affects their commercial value, and vision systems—often accounting for 20–30% of total power draw—are among the largest energy consumers.

A logistics AGV manufacturer’s tests showed: robots equipped with traditional USB cameras (3.5 W) could only operate for 4.2 hours per charge. After switching to low-power USB modules (0.8 W), operating time increased to 6.8 hours—a 61.9% improvement.
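As a rough cross-check of these figures, a constant-load battery model can be sketched as below. It assumes (the test report does not say so) that usable battery energy is identical in both runs and that everything other than the camera draws a constant power. `rest_w` and `battery_wh` are values inferred under that assumption, not measurements.

```python
def runtime_gain_percent(hours_before: float, hours_after: float) -> float:
    """Relative increase in runtime, in percent."""
    return (hours_after - hours_before) / hours_before * 100.0


def infer_rest_of_system_w(cam_before_w: float, cam_after_w: float,
                           hours_before: float, hours_after: float) -> float:
    """Solve (rest + cam_before) * t_before == (rest + cam_after) * t_after for rest.

    Assumes the same usable battery energy in both runs (an assumption,
    not something stated in the source).
    """
    return ((cam_before_w * hours_before - cam_after_w * hours_after)
            / (hours_after - hours_before))


# Figures quoted in the article: 3.5 W -> 0.8 W camera, 4.2 h -> 6.8 h runtime.
gain = runtime_gain_percent(4.2, 6.8)
rest_w = infer_rest_of_system_w(3.5, 0.8, 4.2, 6.8)
battery_wh = (rest_w + 3.5) * 4.2  # implied usable energy under the model

print(f"runtime gain:            {gain:.1f} %")
print(f"implied rest-of-system:  {rest_w:.2f} W")
print(f"implied usable energy:   {battery_wh:.1f} Wh")
```

Under this model the camera's share of total draw before the swap comes out near 50%, above the 20–30% range cited earlier, so the result is best read as an order-of-magnitude check rather than a reconstruction of the actual test.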

This gain is even more critical for inspection robots. Substation inspection robots must move autonomously among high-voltage equipment for 8+ hours. If their infrared thermal imaging USB modules use low-power designs (e.g., dynamically lowering the frame rate to 5 fps in idle mode), charging frequency can drop from twice a day to once. In one power grid case, robots equipped with low-power vision modules reduced monthly O&M costs by 37%, primarily due to less downtime for charging and slower battery degradation.

In consumer scenarios, household cleaning robots with navigation systems consuming over 1 W can see battery cycle life fall from 500 cycles to 350. Using sub-0.5 W low-power designs not only extends each cleaning session but also increases the robot’s lifespan from 2 years to over 3.

Robotic embedded systems often have enclosed spaces (e.g., at the end of a robotic arm or inside a drone gimbal) with little to no active cooling. Vision module power draw directly impacts overall thermal management.

A cobot manufacturer encountered a typical problem: when its robotic arm ran vision-guided picking tasks for 3 continuous hours, traditional USB cameras (2.8 W) raised nearby circuitry temperatures to 65°C, triggering CPU throttling and reducing picking accuracy from ±0.1 mm to ±0.3 mm.

Low-power USB modules address this with three approaches:

Tests show that in sealed environments, USB modules drawing less than 1 W can run for 8 hours with surface temperatures only 7°C above ambient, requiring no extra cooling.

In medical robotics, low power is even more critical. For example, endoscopic USB modules in surgical robots, if too hot, could affect precision mechanics or pose risks to human tissue. One neurosurgical robot uses a 0.6 W low-power USB vision module that keeps lens housing temperature below 37°C during 2-hour surgeries, meeting medical safety standards.

While USB’s plug-and-play nature is inherently integration-friendly, high-power modules often require separate power lines, increasing complexity. Traditional industrial cameras frequently need a 12 V DC supply, but low-power USB modules can draw directly from a USB 2.0 port (5 V, 500 mA, or 2.5 W), achieving “one-cable data + power”.

In agricultural plant-protection drones, this simplification is crucial. A multispectral vision system with 4 low-power USB modules (total 2.4 W) can be powered via the onboard USB hub without modifying the battery management system. One 20 kg-class drone reduced wiring weight by 0.3 kg, extending flight time from 15 to 18 minutes—boosting coverage area by 20% per sortie.
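A quick power-budget check along these lines can be sketched as follows. The even 0.6 W split per module and the `margin` parameter are assumptions for illustration. The source gives only the 2.4 W total and does not say whether the hub is self-powered or how it shares current across ports.

```python
USB2_PORT_W = 5.0 * 0.5  # USB 2.0 high-power port: 5 V at 500 mA = 2.5 W
USB3_PORT_W = 5.0 * 0.9  # USB 3.x port: 5 V at 900 mA = 4.5 W


def fits_budget(module_watts, budget_w, margin=0.0):
    """Return (ok, total_w).

    ok is True when the summed module draw stays within
    budget_w * (1 - margin); margin is a hypothetical safety reserve.
    """
    total_w = sum(module_watts)
    return total_w <= budget_w * (1.0 - margin), total_w


# Four modules, assumed to draw 0.6 W each (2.4 W total as in the article).
modules = [0.6] * 4

ok_usb2, total_w = fits_budget(modules, USB2_PORT_W)
ok_usb2_reserve, _ = fits_budget(modules, USB2_PORT_W, margin=0.10)
ok_usb3_reserve, _ = fits_budget(modules, USB3_PORT_W, margin=0.10)

print(f"total draw: {total_w:.1f} W")
print(f"fits 2.5 W budget: {ok_usb2}")
print(f"fits 2.5 W budget with 10% reserve: {ok_usb2_reserve}")
print(f"fits 4.5 W budget with 10% reserve: {ok_usb3_reserve}")
```

The 2.4 W total leaves only 0.1 W of headroom against a single 2.5 W budget, so any design that wants a reserve for inrush or peak draw would need a self-powered hub or a higher-current upstream port.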

Modular designs also improve maintenance. In an automotive welding workshop, low-power USB camera modules with hot-swap capability reduced average maintenance time from 45 minutes (industrial camera) to 8 minutes—avoiding full line shutdowns. This saved 30 hours of downtime annually, worth about ¥1.5M in production value.

In power-starved environments, low-power USB modules may be the only option. Polar research robots working at –40°C may benefit from heat generation, but high-power modules drain limited battery reserves quickly. A polar expedition team’s low-power USB vision system (0.7 W) with thermal insulation operated for 72 continuous hours during Antarctic polar night crevasse monitoring.

In space robotics, low power is a hard requirement. For example, each 1 W reduction in an ISS exterior inspection robot’s vision system saves ~3 kg of fuel over a 6-month mission. NASA’s latest Robonaut 2 prototype uses a 0.5 W USB vision module—one-fifth the previous generation’s consumption—making extended space missions feasible.

New low-power USB modules go beyond hardware optimization, using AI algorithms for dynamic power management. A USB camera with edge computing can adjust resolution and frame rate based on scene complexity:

This reduces warehouse robot vision system average power by 40%.
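One way such scene-adaptive control could look is sketched below. The complexity score, the thresholds, the three modes, and the per-mode power figures are all hypothetical placeholders chosen for illustration. None of them come from a specific product or from the source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mode:
    name: str
    width: int
    height: int
    fps: int
    power_w: float  # hypothetical average draw in this mode


# Hypothetical operating modes, ordered from lowest to highest power.
MODES = (
    Mode("idle", 640, 480, 5, 0.3),
    Mode("normal", 1280, 720, 15, 0.6),
    Mode("busy", 1920, 1080, 30, 1.0),
)


def scene_complexity(prev, curr):
    """Mean absolute difference of two grayscale frames, scaled to 0..1.

    Frames are flat sequences of 0..255 pixel values of equal length.
    Frame differencing is just one possible proxy for scene complexity.
    """
    total = sum(abs(a - b) for a, b in zip(prev, curr))
    return total / (255.0 * len(curr))


def select_mode(score, low=0.02, high=0.10):
    """Map a complexity score to a mode using two hypothetical thresholds."""
    if score < low:
        return MODES[0]
    if score < high:
        return MODES[1]
    return MODES[2]


def average_power(scores):
    """Average power over equal-length intervals with the given scores."""
    return sum(select_mode(s).power_w for s in scores) / len(scores)


static = scene_complexity([10] * 100, [10] * 100)  # identical frames
print(select_mode(static).name)
print(f"{average_power([0.0, 0.0, 0.5, 0.05]):.2f} W")
```

A real controller would likely add hysteresis or a minimum dwell time so the camera does not flip between modes on every frame. That is omitted here to keep the sketch short.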

USB 3.2 Gen 2x1 ports supply up to 900 mA at 5 V (4.5 W), which gives low-power modules with small NPUs enough headroom to run AI inference locally. One logistics robot using this architecture improved barcode recognition performance while adding only 0.5 W of power draw, cutting main CPU workload by 80% and lowering overall system power.

Looking ahead, with 3D-stacked chips and new optoelectronic materials, low-power USB modules could achieve microwatt standby and sub-100 mW active operation. At <0.1 W, energy harvesting (e.g., from robot motion) could allow perpetual operation—completely reshaping embedded vision design.

From data center inspection robots to home companion robots, from deep-sea exploration to space missions, low-power USB modules are delivering big vision on a small energy budget. As one robotics engineer put it:

“We spent three years optimizing algorithms to gain 1% recognition accuracy—only to find that switching to a low-power camera improved system uptime by 30%… because the robot could finally ‘keep its eyes open’ all day.”

Expert FAQ: Engineering "Eye-in-Hand" Visual Systems (2026 Edition)

Q1: "What are the primary advantages of an Eye-in-Hand calibration architecture versus Eye-to-Hand for 6-DoF robotic arms?"

A: Eye-in-Hand architecture (camera mounted on the end-effector) minimizes occlusion issues, as the camera's view is never blocked by the robot's own arm. Critically, it provides higher precision at the grasping point because the visual resolution effectively increases as the gripper approaches the target object. This is the preferred architecture for fine manipulation, assembly tasks, and visual servoing in unstructured environments.
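The claim that effective resolution increases on approach follows from pinhole geometry: the footprint of one pixel on the target shrinks in proportion to distance. A minimal sketch, assuming an ideal pinhole camera viewing a flat target head-on, with a hypothetical 60° horizontal field of view and a 1280-pixel-wide image:

```python
import math


def mm_per_pixel(distance_mm: float, hfov_deg: float, width_px: int) -> float:
    """Object-space width covered by one pixel at the given distance.

    Assumes an ideal pinhole camera and a flat target facing the camera.
    """
    scene_width_mm = 2.0 * distance_mm * math.tan(math.radians(hfov_deg) / 2.0)
    return scene_width_mm / width_px


# Hypothetical camera: 60 degree HFOV, 1280 px wide.
for d in (300, 150, 50):
    print(f"{d:>4} mm -> {mm_per_pixel(d, 60.0, 1280):.3f} mm/px")
```

With these assumed values the footprint drops from about 0.27 mm per pixel at 300 mm to about 0.045 mm per pixel at 50 mm, a sixfold gain in spatial resolution from approach alone.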

Q2: "Is a Global Shutter sensor mandatory for visual servoing in high-speed pick-and-place applications?"

A: Yes. For dynamic visual servoing where the robot is in continuous motion, a global shutter is effectively a requirement. Rolling shutter sensors expose image rows at different times, so camera motion produces "jello" artifacts (geometric skew). That skew corrupts the pose estimate passed through the coordinate transformation (TCP to world) used by the controller, introducing motion-dependent positioning errors into the feedback loop.
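To get a feel for the size of the skew, it can be estimated as lateral speed multiplied by the sensor's frame readout time. The sketch below uses hypothetical values (500 mm/s relative motion, 0.25 mm per pixel at the working distance, 20 ms readout) and considers only pure lateral motion.

```python
def rolling_shutter_skew(speed_mm_s: float, mm_per_px: float, readout_s: float):
    """Return (skew_mm, skew_px) between the first and last image row.

    speed_mm_s: lateral speed of the scene relative to the camera
    mm_per_px:  object-space size of one pixel at the working distance
    readout_s:  time taken to read out all rows of one frame
    """
    skew_mm = speed_mm_s * readout_s
    return skew_mm, skew_mm / mm_per_px


# Hypothetical values for illustration only.
skew_mm, skew_px = rolling_shutter_skew(500.0, 0.25, 0.020)
print(f"skew: {skew_mm:.1f} mm ({skew_px:.0f} px) between top and bottom rows")
```

Under these assumptions the top and bottom rows of a single frame disagree by about 10 mm in object space, far more than a sub-millimetre picking tolerance allows. A global shutter exposes all rows together, so only ordinary motion blur over the exposure time remains.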

Q3: "What are the standard form factor constraints for integrating embedded vision into humanoid fingertips or cobot grippers?"

A: Space is the limiting factor. The industry standard for embedded end-effector vision is now the 15x15mm or smaller PCBA footprint. Engineers must prioritize z-height (thickness) to prevent the camera from interfering with the grasping mechanism. Specialized ODMs like goobuy have developed ultra-compact 15mm modules specifically for this niche, integrating lens mounts and USB interfaces into a profile thin enough to slide inside a standard aluminum extrusion or 3D-printed phalanx.

Q4: "How do you mitigate USB cable fatigue and signal loss in robotic end-effectors with continuous wrist rotation?"

A: Standard consumer USB cables fail rapidly due to torsion and bending fatigue. The engineering standard is to use High-Flex ("Drag Chain Rated") cables tested for 5-10 million cycles. Furthermore, signal integrity over long distances (arm length + controller distance) often requires active repeaters or transitioning to a SerDes (GMSL2/FPD-Link) interface to ensure reliable data transmission through the robot's internal slip rings.

Q5: "How to solve minimum object distance (MOD) issues when the camera is mounted less than 50mm from the grasping target?"

A: Standard M12 lenses often have a MOD of around 20 cm, resulting in blurry images during the final grasping phase. For Eye-in-Hand systems, specify lenses with macro capability, or use liquid lens technology for fast autofocus. Where the working range is known in advance, a fixed-focus lens set so that its depth of field spans roughly 5 cm to infinity (that is, focused at a hyperfocal distance of about 10 cm) is often the most robust and cost-effective solution for industrial grippers.
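The depth-of-field reasoning behind a fixed-focus choice can be checked with the standard thin-lens formulas. The sketch below is illustrative only: the focal length (1.4 mm), aperture (f/4), and circle of confusion (0.005 mm) are hypothetical values picked so that the near limit lands close to 5 cm, not parameters of any particular lens.

```python
import math


def hyperfocal_mm(focal_mm: float, f_number: float, coc_mm: float) -> float:
    """Hyperfocal distance H = f^2 / (N * c) + f (thin-lens approximation)."""
    return focal_mm ** 2 / (f_number * coc_mm) + focal_mm


def dof_limits_mm(focal_mm: float, f_number: float, coc_mm: float,
                  focus_mm: float):
    """Return (near, far) limits of acceptable sharpness for a focus distance.

    far is math.inf when the lens is focused at or beyond the hyperfocal
    distance.
    """
    h = hyperfocal_mm(focal_mm, f_number, coc_mm)
    near = focus_mm * (h - focal_mm) / (h + focus_mm - 2.0 * focal_mm)
    if focus_mm >= h:
        return near, math.inf
    return near, focus_mm * (h - focal_mm) / (h - focus_mm)


# Hypothetical lens: 1.4 mm focal length, f/4, 0.005 mm circle of confusion.
H = hyperfocal_mm(1.4, 4.0, 0.005)
near, far = dof_limits_mm(1.4, 4.0, 0.005, H)
print(f"hyperfocal distance: {H:.1f} mm")
print(f"sharp from {near:.1f} mm to {'infinity' if math.isinf(far) else far}")
```

Focusing at the hyperfocal distance puts the near limit at half that distance, which is why a 5 cm near limit implies a hyperfocal distance of about 10 cm. Focusing closer than that trades away the far range: with the same hypothetical lens focused at 50 mm, the sharp zone runs only from roughly 33 mm to 99 mm.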

This article was updated on March 14, 2026.